Simulation procedure

The proposed lung cancer classification method was implemented in Python, version 3.7. The experiments were run on an AMD Ryzen 5 3450U processor with Radeon Vega Mobile Gfx (2.10 GHz) and 16.0 GB of installed RAM.

Dataset description

Two datasets support the investigation and validation in this study. Dataset 1 is the LUNA16 dataset33, the main source for the whole analytical process, including model construction and performance evaluation. Dataset 2 is the original LIDC-IDRI34 database, which is used exclusively for cross-validation to check the robustness and generalizability of the results. Using LUNA16 for the main analysis and LIDC-IDRI for validation preserves uniformity in data characteristics while improving the dependability of the conclusions.

Dataset 1 description

Lung cancer classification was analyzed using LUNA1633, which contains 888 CT scans in total. In this research, data were collected from 445 patients: 306 cancer patients and 139 non-cancer patients. Two classes are used: Non-Cancer (label 0) and Cancer (label 1). The experiments use 2,336 entries, a figure that most likely reflects a split made during experimentation, with 1,112 entries assigned to label 0 (Non-Cancer) and 1,224 assigned to label 1 (Cancer). This total (1,112 + 1,224 = 2,336) is separate from the initial count of 888 scans.

The dataset is reasonably balanced, with 1,112 samples in class 0 and 1,224 samples in class 1, so class imbalance is negligible. Because the discrepancy is small, no class-balancing strategies such as undersampling, oversampling, or synthetic data generation (e.g., SMOTE) were needed. The model could therefore learn features from both classes without appreciable bias, which most likely contributed to dependable classification performance. Table 4 shows the numbers of training and testing images. With 60% of the data used for training, there are 1,401 training images and 935 testing images; with 70%, 1,635 and 701; with 80%, 1,868 and 468; and with 90%, 2,102 and 234.

Table 4 Training and testing images.
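The split sizes reported for Table 4 are consistent with flooring the training share of the 2,336 entries and assigning the remainder to testing. The following minimal sketch reproduces that arithmetic; the function name `split_sizes` is illustrative, and the floor rounding rule is an assumption inferred from the reported numbers rather than something the text states.

```python
def split_sizes(total, train_percent):
    """Return (n_train, n_test) for a given training percentage.

    Assumption: the training count is floored and the rest goes to testing.
    """
    n_train = total * train_percent // 100  # integer floor of the training share
    return n_train, total - n_train


if __name__ == "__main__":
    for percent in (60, 70, 80, 90):
        n_train, n_test = split_sizes(2336, percent)
        print(f"{percent}% training: {n_train} train, {n_test} test")
```

Integer arithmetic is used so that the result does not depend on floating-point rounding.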

Dataset 2 description (Dataset used for cross validation)

The LIDC-IDRI dataset was obtained from34. The Lung Image Database Consortium image collection (LIDC-IDRI) includes thoracic computed tomography (CT) scans with annotated lesions for lung cancer screening and diagnosis. This global resource is available online for the development, training, and assessment of computer-aided diagnosis (CAD) techniques for the detection and diagnosis of lung cancer. It is the product of a public–private partnership that was started by the National Cancer Institute (NCI), advanced by the Foundation for the National Institutes of Health (FNIH), and supported by the active participation of the Food and Drug Administration (FDA), and it demonstrates the success of a consortium built on a consensus-based process.

Performance analysis

A comprehensive evaluation was carried out to compare the proposed lung cancer classification method against established methodologies. The CMN-ShuffleNet approach was compared with state-of-the-art techniques, namely modified VGG1635, DNN14, AlexNet-SVM18 and ATT-DenseNet16, as well as conventional classifiers such as Gated Recurrent Unit (GRU), LeNet, Bidirectional Long Short-Term Memory (Bi-LSTM), LinkNet and ShuffleNet. The state-of-the-art models and the conventional methods were implemented from their source code and evaluated on the LUNA16 dataset, and LIDC-IDRI was used for cross-validation. The evaluation used the following performance measures: Sensitivity, Negative Predictive Value (NPV), Specificity, F-measure, False Negative Rate (FNR), Precision, False Positive Rate (FPR), Matthews Correlation Coefficient (MCC), and Accuracy. Further, Fig. 7 displays the original images and the images pre-processed with histogram equalization (HE), a step employed to enhance the quality of the lung images.

Fig. 7

Pre-processing outcomes: (a) sample images and (b) images pre-processed with HE.
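The text does not specify which variant of histogram equalization was applied. As an illustration only, the sketch below implements the textbook global form for an 8-bit grayscale image stored as a nested list; the function name `equalize_histogram` and the choice of 256 grey levels are assumptions, and the image is assumed to be non-empty.

```python
def equalize_histogram(image, levels=256):
    """Global histogram equalization for a 2-D list of integer grey levels.

    Textbook variant (an assumption; the paper does not state its exact HE form).
    """
    flat = [pixel for row in image for pixel in row]

    # Histogram of grey levels.
    hist = [0] * levels
    for pixel in flat:
        hist[pixel] += 1

    # Cumulative distribution of grey levels.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)  # CDF at the darkest occupied level
    n = len(flat)
    if n == cdf_min:  # constant image: nothing to stretch
        return [row[:] for row in image]

    # Map each grey level so that the output CDF is approximately linear.
    scale = (levels - 1) / (n - cdf_min)
    lut = [max(0, round((c - cdf_min) * scale)) for c in cdf]
    return [[lut[pixel] for pixel in row] for row in image]
```

The darkest occupied grey level maps to 0 and the brightest to `levels - 1`, which stretches the contrast of low-contrast CT slices.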

Segmentation analysis

Segmentation accuracy: Accuracy measures how closely the output of a method matches the expected value or standard. Here it quantifies the correctness of the segmentation in terms of the numbers of true positives (\(T_{P}\)), true negatives (\(T_{N}\)), false positives (\(F_{P}\)) and false negatives (\(F_{N}\)), as expressed in Eq. (24).

$${\text{Accuracy}} = \frac{{T_{N} + T_{P} }}{{F_{N} + F_{P} + T_{N} + T_{P} }}$$

(24)

Figure 8 presents sample images alongside the corresponding lobe segmentation results of BIRCH, conventional SegNet, U-Net and mRRB-SegNet. The mRRB-SegNet produced better segmentation results than the conventional approaches. The use of the mRRB layer and the mELS-PReLU activation function allows mRRB-SegNet to capture long-range dependencies and improve segmentation accuracy.

Fig. 8

Segmented Results (a) Sample Images (b) BIRCH (c) Conventional SegNet (d) U-Net and (e) mRRB-SegNet.

Analysis of gradient pattern

Figure 9 shows the input image and the corresponding ILGP image. The gradient pattern is discussed here in terms of how it can improve feature extraction for the classification of lung cancer. Gradient patterns are essential for detecting minute texture alterations in medical images because they capture local intensity variations and directional changes in pixel values. As previously mentioned, the Improved Local Gradient Pattern (ILGP) approach improves on this by focusing on stable directional gradients and reducing noise, producing features that are more robust and discriminative. Since textural anomalies are frequently subtle but diagnostically significant, this is especially helpful in differentiating between malignant and benign tissues.

Fig. 9

Gradient Pattern Analysis for (a) Input image (b) ILGP.
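For orientation, the conventional Local Gradient Pattern on which ILGP builds can be sketched for a single 3 × 3 neighbourhood as follows. This is the conventional operator only, not the authors' improved variant, whose modifications are not reproduced here; the function name `lgp_code` and the clockwise neighbour ordering are illustrative choices.

```python
def lgp_code(patch):
    """Conventional LGP code of the centre pixel of a 3x3 patch.

    `patch` is a list of three lists of three grey levels. Each neighbour
    contributes one bit: 1 if its absolute gradient to the centre is at least
    the mean absolute gradient of the eight neighbours, otherwise 0.
    """
    center = patch[1][1]
    # Eight neighbours, clockwise from the top-left corner (ordering is a convention).
    coords = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    gradients = [abs(patch[r][c] - center) for r, c in coords]
    mean_gradient = sum(gradients) / len(gradients)

    code = 0
    for bit, gradient in enumerate(gradients):
        if gradient >= mean_gradient:
            code |= 1 << bit
    return code
```

Because the threshold is the local mean gradient rather than the centre intensity, the code responds to local intensity variation rather than to absolute brightness.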

Analysis on dice, jaccard and segmentation accuracy

Jaccard Index

The Jaccard index measures the overlap between the ground truth and the predicted segmentation, as given in Eq. (25).

$${\text{Jaccard}}\,{\text{Index}} = \frac{{\left| {A \cap B} \right|}}{{\left| {A \cup B} \right|}}$$

(25)

\(A =\) Set of pixels in the ground truth.

\(B =\) Set of pixels in the predicted segmentation.

Dice coefficient

The Dice coefficient is the harmonic mean of precision and recall, as given in Eq. (26).

$${\text{Dice}}\,{\text{ coefficient}} = 2\frac{{\left| {A \cap B} \right|}}{\left| A \right| + \left| B \right|}$$

(26)
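The three segmentation measures in Eqs. (24) to (26) can all be computed from the same pixel counts. The sketch below assumes binary masks stored as nested lists of 0/1 values; the function name `segmentation_scores` and the convention of returning 1.0 when both masks are empty are illustrative assumptions.

```python
def segmentation_scores(truth, pred):
    """Pixel accuracy, Jaccard index and Dice coefficient for two binary masks.

    `truth` (set A) and `pred` (set B) are equally sized 2-D lists of 0/1.
    """
    tp = tn = fp = fn = 0
    for truth_row, pred_row in zip(truth, pred):
        for t, p in zip(truth_row, pred_row):
            if t and p:
                tp += 1  # pixel in both A and B
            elif not t and not p:
                tn += 1  # pixel in neither set
            elif p:
                fp += 1  # pixel only in B
            else:
                fn += 1  # pixel only in A

    accuracy = (tp + tn) / (tp + tn + fp + fn)  # Eq. (24)
    union = tp + fp + fn                        # |A ∪ B|
    jaccard = tp / union if union else 1.0      # Eq. (25)
    dice = 2 * tp / (2 * tp + fp + fn) if union else 1.0  # Eq. (26), |A| + |B| = 2TP + FP + FN
    return {"accuracy": accuracy, "jaccard": jaccard, "dice": dice}
```

For a single pair of masks the two overlap measures are related by Dice = 2J / (1 + J), so the Dice coefficient is never smaller than the Jaccard index.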

Table 5 compares the segmentation performance of mRRB-SegNet with that of existing segmentation methods, namely BIRCH, U-Net and conventional SegNet, for lobe segmentation. The mRRB-SegNet attained the highest segmentation accuracy of 0.926, whereas the traditional approaches recorded lower values ranging from 0.754 to 0.799. Additionally, the mRRB-SegNet achieved a higher Dice score of 0.911 and Jaccard score of 0.903 than BIRCH, U-Net and conventional SegNet. The mRRB layer in mRRB-SegNet allows for deeper network architectures, which lets the network learn more complex features.

Table 5 Dice, Jaccard and Segmentation Accuracy analysis on mRRB-SegNet and Existing Segmentation Methods.

Analysis of feature comparison

Table 6 presents a feature comparison analysis of the approaches used in the lung cancer classification model: using only the improved Local Gradient Pattern (LGP), using only shape-based features, using no feature extraction, and the suggested method that combines both feature types. The suggested approach performs noticeably better than the others on every assessed metric. It maintains the lowest false positive rate (FPR: 4.50%) and false negative rate (FNR: 6.10%) while achieving the highest accuracy (94.66%), sensitivity (93.90%), specificity (95.50%), precision (95.85%), F-measure (94.87%), MCC (0.8932), and NPV (93.39%). In contrast, performance decreases when only LGP or only shape-based features are used, most noticeably in sensitivity and MCC, indicating that neither feature type alone is sufficient for the best discrimination.

Table 6 Feature comparison analysis.
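Several of the measures reported for Table 6 are linked by definition: FNR is the complement of sensitivity, FPR is the complement of specificity, and the F-measure is the harmonic mean of precision and sensitivity. The short sketch below applies these identities to the values quoted above for the suggested approach; the function name `derived_rates` is illustrative.

```python
def derived_rates(sensitivity, specificity, precision):
    """Return (FNR, FPR, F-measure) from three reported rates (all in [0, 1])."""
    fnr = 1.0 - sensitivity  # missed positives
    fpr = 1.0 - specificity  # false alarms
    f_measure = 2 * precision * sensitivity / (precision + sensitivity)
    return fnr, fpr, f_measure


if __name__ == "__main__":
    fnr, fpr, f_measure = derived_rates(0.9390, 0.9550, 0.9585)
    print(f"FNR={fnr:.3f}, FPR={fpr:.3f}, F-measure={f_measure:.3f}")
```

With the quoted sensitivity, specificity and precision, the identities give an FNR of about 0.061, an FPR of about 0.045 and an F-measure of about 0.949, in line with the reported figures up to rounding.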

Comparative analysis

The CMN-ShuffleNet approach for lung cancer classification is systematically compared against existing strategies: GRU, LeNet, Bi-LSTM, LinkNet, ShuffleNet, modified AlexNet-SVM18 and ATT-DenseNet16. The assessment covers a wide-ranging set of performance measures, grouped into positive, negative and neutral measures, which are shown in Figs. 10, 11 and 12. An effective lung cancer classification system should attain high values for the positive and neutral measures while keeping the negative measures low. At 60% training data, the CMN-ShuffleNet achieved an accuracy of 0.905, which is notably higher than that of the existing approaches. As the training data increased to 70%, 80% and 90%, the CMN-ShuffleNet maintained its lead with accuracies of 0.912, 0.947 and 0.958, respectively. The accuracy of the conventional methods (GRU, LeNet, Bi-LSTM, LinkNet, ShuffleNet, modified AlexNet-SVM18 and ATT-DenseNet16) also increased with more training data but did not surpass that of the CMN-ShuffleNet method. At 90% training data, the CMN-ShuffleNet approach reached a sensitivity of 0.957, signifying that it is effective in identifying lung cancer. In contrast, the conventional methods recorded lower sensitivity values: GRU at 0.876, LeNet at 0.884, Bi-LSTM at 0.843, LinkNet at 0.901, ShuffleNet at 0.893, modified AlexNet-SVM18 at 0.893 and ATT-DenseNet16 at 0.917. ILGP can accurately identify small changes in the intensities of individual pixels, which increases the discriminative power of the features.

Fig. 10

Positive metric assessment on CMN-ShuffleNet versus existing methods (i) Accuracy (ii) Precision (iii) Sensitivity and (iv) Specificity.

Fig. 11

Negative metric assessment on CMN-ShuffleNet versus existing methods (i) FNR and (ii) FPR.

Fig. 12

Neutral metric assessment on CMN-ShuffleNet versus existing methods (i) F-measure (ii) MCC and (iii) NPV.

The CMN-ShuffleNet achieves the highest NPV of 0.955 at 90% training data, exhibiting its excellent classification performance. In comparison, Bi-LSTM, GRU, LeNet and modified AlexNet-SVM18 obtained NPVs of 0.836, 0.867, 0.874 and 0.945, respectively. Although these models perform well, they do not match the CMN-ShuffleNet model’s ability to classify lung cancer precisely. ShuffleNet, LinkNet and ATT-DenseNet16 have NPVs of 0.891, 0.896 and 0.940, which also rank below the CMN-ShuffleNet approach. Moreover, the CMN-ShuffleNet model attained an FNR of 0.061 at 80% training data, which is markedly lower than that of the other methods: GRU, LeNet, Bi-LSTM, LinkNet, ShuffleNet, modified AlexNet-SVM18 and ATT-DenseNet16 obtained higher FNR values of 0.187, 0.142, 0.167, 0.122, 0.134, 0.125 and 0.104, respectively. First, an enhanced M-SegNet (Modified SegNet) model is employed to reduce false positives and improve border detection for accurate lung area segmentation. The computationally efficient Modified ShuffleNet is then trained on raw image data together with gradient patterns and shape-based features to capture more discriminative information. This hybrid feature integration enhances classification performance while keeping the model lightweight and adaptable for use in clinical settings with limited resources. The mean normalization layer and a convolutional block attention module introduce channel and spatial attention mechanisms, which significantly improve the classification performance.

Statistical assessment in terms of accuracy

To assess the efficacy of the CMN-ShuffleNet strategy, a statistical analysis comparing it with existing approaches (GRU, LeNet, Bi-LSTM, LinkNet, ShuffleNet, modified AlexNet-SVM18 and ATT-DenseNet16) for lung cancer classification is illustrated in Fig. 13. For the Maximum statistical measure, the CMN-ShuffleNet approach attains the greatest accuracy of 0.958, suggesting its ability to reach the highest level of performance. This score surpasses that of GRU (0.872), Bi-LSTM (0.850), LinkNet (0.906), ShuffleNet (0.915), modified AlexNet-SVM18 (0.950) and ATT-DenseNet16 (0.945). ILGP produces a smaller threshold value, which helps to capture changes in discriminative information. For the Mean statistical measure, the CMN-ShuffleNet approach attained the highest accuracy of 0.930, whereas GRU, LeNet, Bi-LSTM, LinkNet, ShuffleNet, modified AlexNet-SVM18 and ATT-DenseNet16 achieved lower accuracy values. The CBAM module dynamically adjusts its attention based on the features, allowing the network to prioritize regions specific to lung cancer patterns. This leads the CMN-ShuffleNet model to a better classification of cancerous regions.

Fig. 13

Statistical evaluation in terms of accuracy.
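The Maximum and Mean statistics quoted for CMN-ShuffleNet agree with the accuracies reported in the comparative analysis at 60%, 70%, 80% and 90% training data. The sketch below makes that check explicit; it rests on the assumption, not stated in the text, that the statistics are taken over these four training percentages, and the names `cmn_accuracy` and `summarize` are illustrative.

```python
from statistics import mean

# Accuracies of CMN-ShuffleNet reported in the comparative analysis,
# keyed by training percentage.
cmn_accuracy = {60: 0.905, 70: 0.912, 80: 0.947, 90: 0.958}


def summarize(values):
    """Return the maximum and mean of a collection of scores."""
    values = list(values)
    return {"max": max(values), "mean": mean(values)}


if __name__ == "__main__":
    stats = summarize(cmn_accuracy.values())
    print(f"max={stats['max']:.3f}, mean={stats['mean']:.4f}")
```

Under this assumption the maximum is 0.958 and the mean is 0.9305, which matches the reported 0.958 and 0.930 up to rounding.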

Comparison with the state-of-the-art models

Figure 14 shows that the suggested CMN-ShuffleNet model outperforms the baseline models such as VGG16, DNN, AlexNet-SVM, and ATT-DenseNet in the categorization of lung cancer. With the best overall classification efficacy, CMN-ShuffleNet attains the maximum accuracy (94.66%), sensitivity (93.90%), specificity (95.50%), precision (95.85%), and F-measure (94.87%). It also shows the strongest correlation between the actual and predicted classes, with the greatest Matthews Correlation Coefficient (MCC) of 0.8932. To further bolster its dependability, CMN-ShuffleNet also maintains the lowest false positive rate (FPR = 0.045) and a competitively low false negative rate (FNR = 0.061). CMN-ShuffleNet effectively balances specificity and sensitivity, avoiding bias toward either class, in contrast to VGG16 and AlexNet-SVM, which exhibit somewhat lower specificity and sensitivity. With these enhancements, CMN-ShuffleNet is a strong option for precise and effective lung cancer diagnosis, demonstrating the value of combining gradient and shape characteristics with a lightweight yet expressive network structure.

Fig. 14

Analysis of State-of-the-art Comparison Models.

Analysis on individual features

Fig. 15 compares the performance of several feature extraction techniques, namely statistical features, shape-based features, the conventional local gradient pattern (LGP), and the suggested combined approach, within the Modified ShuffleNet framework trained on gradient pattern and shape-based features for lung cancer classification using enhanced M-SegNet segmentation. With the best accuracy (0.9466), sensitivity (0.9390), specificity (0.9550), precision (0.9585), F-measure (0.9487), and the strongest Matthews Correlation Coefficient (MCC: 0.8932), the suggested approach performs noticeably better than any of the individual feature sets. In addition, it produces the lowest false positive rate (FPR: 0.0450) and false negative rate (FNR: 0.0610), as well as the best negative predictive value (NPV: 0.9339), suggesting a more accurate and balanced classification. Although statistical features outperform shape-based and LGP features individually, none of the single-feature methods can match the robustness and discriminative capability of the suggested combined feature strategy. This highlights how combining complementary features can improve classification accuracy by capturing both structural and textural subtleties in lung cancer imaging.

Fig. 15

Individual feature analysis.

Ablation study

An ablation study was conducted to evaluate the impact of the individual components on the performance of the CMN-ShuffleNet approach. The study examined distinct configurations, namely CMN-ShuffleNet without preprocessing, with existing LGP, with existing SegNet, with shape-based features only and with statistical features only, as shown in Table 7. The CMN-ShuffleNet with existing LGP and with existing SegNet achieved F-measures of 0.901 and 0.889, respectively, while the CMN-ShuffleNet with shape-based features only and without pre-processing recorded F-measures of 0.889 and 0.890. The CMN-ShuffleNet with statistical features only recorded an F-measure of 0.915, showing improved performance in lung cancer classification. However, the full CMN-ShuffleNet approach achieves a higher F-measure of 0.949, revealing its superior performance in accurately classifying lung cancer. The CMN-ShuffleNet also achieves the minimum FPR of 0.045, whereas all five ablated configurations recorded greater FPR values.

Table 7 Ablation Evaluation on CMN-ShuffleNet Strategy, CMN-ShuffleNet without preprocessing, CMN-ShuffleNet with existing LGP, CMN-ShuffleNet with existing SegNet, CMN-ShuffleNet with shape-based feature only and CMN-ShuffleNet with statistical feature only.

Analysis of three-stage feature dimension

The three-stage feature dimension analysis is shown in Table 8. The input layer processes 224 × 224 × 3 images, which are typical of medical imaging standards. The first convolutional layer performs early feature extraction: it increases the channel depth from 3 to 24 and halves the spatial dimensions to 112 × 112. Batch normalization and ReLU activation follow, standardizing the activations and introducing non-linearity without changing the dimensions. A max pooling layer then reduces the spatial dimensions to 56 × 56, summarizing the feature maps efficiently while maintaining the channel depth. This compact feature dimension progression reflects the lightweight yet discriminative design of the modified ShuffleNet, which is tuned for extracting crucial texture and shape cues related to lung cancer detection from the segmented regions generated by the improved M-SegNet.

Table 8 Three-stage feature dimension.
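The dimension progression described for Table 8 follows from the usual output-size formula for strided convolution and pooling. In the sketch below, the 3 × 3 kernel, stride of 2 and padding of 1 are assumptions taken from the standard ShuffleNet stem, since the text gives only the resulting sizes; the function names are illustrative.

```python
def conv_output_size(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


def stem_shapes(height=224, width=224, channels=3):
    """Trace (layer, height, width, channels) through the first three stages.

    Assumed hyperparameters: 3x3 kernels, stride 2, padding 1 (standard
    ShuffleNet stem), with 24 output channels after the first convolution.
    """
    shapes = [("input", height, width, channels)]
    h = conv_output_size(height, 3, 2, 1)
    w = conv_output_size(width, 3, 2, 1)
    shapes.append(("conv1", h, w, 24))
    shapes.append(("bn_relu", h, w, 24))  # normalization and activation keep the shape
    h = conv_output_size(h, 3, 2, 1)
    w = conv_output_size(w, 3, 2, 1)
    shapes.append(("maxpool", h, w, 24))  # pooling keeps the channel depth
    return shapes


if __name__ == "__main__":
    for name, h, w, c in stem_shapes():
        print(f"{name}: {h} x {w} x {c}")
```

Under these assumptions the trace gives 224 × 224 × 3, then 112 × 112 × 24 after the first convolution, and 56 × 56 × 24 after max pooling.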

Ablation study based on the improved segmentation

Table 9 shows an ablation study that assesses several activation functions and the effect of removing the Residual Refinement Block (RRB) in the suggested Modified ShuffleNet, which is trained on gradient pattern and shape-based features for lung cancer classification using enhanced M-SegNet segmentation. Among the tested activation functions, the conventional softplus function performs best overall, obtaining the highest accuracy (0.935), sensitivity (0.934), specificity (0.936), precision (0.940), and F-measure (0.937). It also maintains lower false positive (FPR: 0.064) and false negative (FNR: 0.066) rates. On the other hand, traditional ReLU exhibits the lowest accuracy (0.917) and MCC (0.859), among other metrics. The parametric ReLU (PReLU) outperforms ReLU, with modest gains in all metrics, although it still falls short of softplus. Softplus significantly improves model performance in this lung cancer classification framework, highlighting the importance of activation function selection.

Table 9 Analysis of ablation study based on the activation functions.
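For reference, the three conventional activation functions compared in this ablation have the following scalar definitions. The sketch covers only these standard functions, not the authors' mELS-PReLU; the slope `alpha=0.25` is an illustrative value, since in PReLU this parameter is learned during training.

```python
import math


def relu(x):
    """ReLU: passes positive inputs and clamps negative inputs to zero."""
    return max(0.0, x)


def prelu(x, alpha=0.25):
    """PReLU: like ReLU, but negative inputs are scaled by a slope alpha.

    alpha is learned in practice; 0.25 here is only an illustrative value.
    """
    return x if x >= 0 else alpha * x


def softplus(x):
    """Softplus: log(1 + exp(x)), a smooth approximation of ReLU.

    Written as max(x, 0) + log1p(exp(-|x|)) to avoid overflow for large |x|.
    """
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))
```

Unlike ReLU, softplus is differentiable everywhere and has a non-zero gradient for negative inputs.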

Analysis of cross-validation

Cross-validation analysis provides a strong framework for evaluating the model’s generalization ability across various data subsets, as shown in Table 10. Dataset 1 is used for training, and Dataset 2 (LIDC-IDRI) is used for testing in the cross-validation. By repeatedly training and testing the model on various dataset partitions, cross-validation ensures that the reported performance measures, such as an accuracy of 94.79%, sensitivity of 94.68%, and specificity of 94.94%, are not the consequence of overfitting to a specific subset. The high precision (95.07%) and F-measure (94.87%) values indicate strong predictive ability and a balance between false positives and false negatives. The model’s dependable performance in binary classification is further demonstrated by its Matthews Correlation Coefficient (MCC) of 0.9066, a measure that takes into account both correct and erroneous predictions. The low false positive rate (FPR = 5.06%) and false negative rate (FNR = 5.32%) further demonstrate the model’s robustness in differentiating between malignant and non-malignant cases, a crucial diagnostic function in medicine. This investigation confirms the efficacy of the suggested deep learning pipeline, which combines sophisticated feature extraction and segmentation techniques for precise lung cancer identification.

Table 10 Cross-validation analysis.

Analysis of K-fold validation

K-fold validation is a reliable cross-validation method for evaluating the performance and generalizability of machine learning models, and it is especially useful in medical imaging, where data variability is significant. In this study, K-fold validation was used to assess the consistency and dependability of several deep learning models, including the suggested Modified ShuffleNet, trained on gradient pattern and shape-based features taken from lung CT images; the results are shown in Table 11. Each model was trained and validated k times using the dataset’s k subsets (folds), with each iteration using a different fold for testing and the remaining folds for training. This method reduces variance and bias in performance estimation. Combined with improved M-SegNet segmentation and gradient pattern and shape-based feature training, the suggested CMN-ShuffleNet consistently outperformed all other models, including conventional architectures like GRU, LeNet, and Bi-LSTM, across all five folds, attaining the greatest accuracy in each fold (ranging from 0.9379 to 0.9503). This improved performance demonstrates the value of combining feature-rich representations with a network architecture that is both lightweight and strong. The superior robustness and precision of CMN-ShuffleNet support its applicability for accurate lung cancer diagnosis.

Table 11 K-fold validation analysis.
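The fold construction described above can be sketched as an index generator. The version below uses contiguous, unshuffled folds for clarity; whether the study shuffled or stratified the folds is not stated, and the function name `k_fold_indices` is illustrative.

```python
def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, test_indices) for k contiguous folds.

    Each sample appears in exactly one test fold. Fold sizes differ by at most
    one when n_samples is not divisible by k. No shuffling or stratification
    is applied in this sketch.
    """
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


if __name__ == "__main__":
    for fold, (train_idx, test_idx) in enumerate(k_fold_indices(2336, k=5), start=1):
        print(f"fold {fold}: {len(train_idx)} train, {len(test_idx)} test")
```

A model would be trained on `train_idx` and scored on `test_idx` in each iteration, and the k scores would then be reported per fold as in Table 11.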

Analysis of the Friedman test

The Friedman test analysis shown in Table 12 assesses the statistical significance of performance differences among the deep learning architectures. The p-values of the Friedman test indicate whether the observed performance differences are statistically significant or the result of chance. Compared with CMN-ShuffleNet, models such as ShuffleNet (p = 0.014) and ATT-DenseNet (p = 0.043) exhibit statistically significant differences, indicating that CMN-ShuffleNet offers better or noticeably different performance. However, models like LeNet (p = 0.089) and BiLSTM (p = 0.176) exhibit less statistical differentiation, suggesting similar performance. Overall, the Friedman test indicates that CMN-ShuffleNet’s combination of gradient and shape features with M-SegNet’s enhanced segmentation results in statistically significant improvements over a number of conventional models for classifying lung cancer.

Table 12 Friedman test analysis.
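The textbook Friedman statistic ranks the models within each block (for example, each fold) and compares the rank sums across models. The sketch below computes only this statistic, without tie correction; the corresponding p-value would come from a chi-square distribution with k − 1 degrees of freedom and is not computed here. How the per-model p-values in Table 12 were obtained is not described in the text, so this is an illustration of the general test rather than of the authors' procedure, and all names are illustrative.

```python
def average_ranks(values):
    """Ranks 1..k in ascending order; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        i = j + 1
    return ranks


def friedman_statistic(scores):
    """Friedman chi-square statistic (no tie correction).

    scores[b][m] is the score of model m on block b (e.g. one fold).
    """
    n = len(scores)      # number of blocks
    k = len(scores[0])   # number of models
    rank_sums = [0.0] * k
    for row in scores:
        for m, rank in enumerate(average_ranks(row)):
            rank_sums[m] += rank
    return 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)
```

The statistic is 0 when all models have equal rank sums and reaches its maximum of n(k − 1) when the models are ranked identically in every block.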

Analysis of Wilcoxon test

Table 13 presents the Wilcoxon test analysis, which compares CMN-ShuffleNet with the other models and highlights the statistical significance of the performance differences. The Wilcoxon test is a non-parametric statistical test used to compare matched samples and determine whether their population mean ranks differ. Here it was used to assess the statistical significance of the performance differences between CMN-ShuffleNet, the modified ShuffleNet trained on gradient pattern and shape-based features for lung cancer classification, and the other models. The differences with respect to GRU (p = 0.004), AlexNet-SVM (p = 0.004), LeNet (p = 0.047), and ATT-DenseNet (p = 0.035) are statistically significant, as their p-values fall below the conventional 0.05 cutoff. This implies that, when trained on gradient pattern and shape-based features, the suggested model performs noticeably better than these architectures in lung cancer classification. The comparisons with BiLSTM (p = 0.160), LinkNet (p = 0.190), and ShuffleNet (p = 0.138) did not yield statistically significant differences, indicating comparable performance.

Table 13 Analysis of the Wilcoxon test.
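A Wilcoxon signed-rank comparison of two models on matched scores can be sketched as follows. The function returns the smaller of the positive and negative rank sums together with an exact two-sided p-value obtained by enumerating all sign assignments, which is practical only for a small number of pairs. The number and nature of the paired observations used for Table 13 are not stated, so the function and its inputs are illustrative.

```python
from itertools import product


def _average_ranks(values):
    """Ranks 1..n in ascending order; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def wilcoxon_signed_rank(x, y):
    """Return (statistic, exact two-sided p-value) for paired samples x and y.

    Zero differences are discarded. The p-value enumerates all 2**n sign
    assignments, so this sketch is intended for small n only.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    if not diffs:
        raise ValueError("all paired differences are zero")
    ranks = _average_ranks([abs(d) for d in diffs])
    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    statistic = min(w_plus, total - w_plus)

    hits = 0
    for signs in product((False, True), repeat=len(diffs)):
        w = sum(r for r, positive in zip(ranks, signs) if positive)
        if min(w, total - w) <= statistic:
            hits += 1
    return statistic, hits / 2 ** len(diffs)
```

With n non-zero paired differences the exact two-sided p-value cannot fall below 2 / 2**n, so the number of paired observations determines how small a p-value the test can report.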

Analysis of T-test value

Table 14 reports a T-test analysis that compares the performance of the suggested CMN-ShuffleNet model with that of the other models. The T-test assesses whether the differences in classification accuracy (or other performance measures) between each baseline model and CMN-ShuffleNet are statistically significant or merely the result of chance. By computing the T-test value, the study evaluates whether the improvements achieved by the modified ShuffleNet are consistent relative to the rival models, supporting the efficacy of combining gradient pattern and shape-based features in the classification of lung cancer. LeNet (0.108446), LinkNet (0.194792), ShuffleNet (0.164762), and ATT-DenseNet (0.160142) displayed a moderate statistical difference, suggesting some degree of performance variation. Conversely, AlexNet-SVM (0.264762) and GRU (0.601421) exhibited comparatively higher T-test values, indicating less significant differences. Overall, these findings demonstrate CMN-ShuffleNet’s efficacy compared with the other existing models.

Table 14 T-test analysis.
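One common way to compare two models evaluated on the same folds or splits is the paired t statistic, sketched below. Whether the study used a paired or an independent-samples test, and on which scores, is not stated, so this is an assumption for illustration; the function name `paired_t_statistic` is likewise illustrative.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(x, y):
    """Paired t statistic for matched samples x and y.

    t = mean(d) / (sd(d) / sqrt(n)), where d are the paired differences and
    sd is the sample standard deviation. The test has n - 1 degrees of freedom.
    """
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    if n < 2:
        raise ValueError("at least two pairs are required")
    return mean(diffs) / (stdev(diffs) / math.sqrt(n))
```

The statistic is converted to a p-value using the t distribution with n − 1 degrees of freedom; that step is omitted here because it requires a statistics library beyond the Python standard library.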

Analysis of P-test value

Table 15 presents the P-test analysis, which contrasts different deep learning models with the CMN-ShuffleNet. A P-test, or probability test, is a statistical technique used to assess whether performance differences between two models are statistically significant or more likely to be the result of chance. A lower P-value (usually less than 0.05) denotes a substantial difference in classification performance, whereas a higher P-value (close to 1) indicates that the models perform similarly. The high P-values of BiLSTM (0.972), LeNet (0.891), and LinkNet (0.805) indicate performance that is statistically equivalent to that of CMN-ShuffleNet and suggest that these models may capture similar feature representations or have equal classification efficacy. Other models such as AlexNet-SVM (0.635), ShuffleNet (0.735), and ATT-DenseNet (0.739) also exhibit moderate resemblance, although with greater variability. These findings demonstrate the competitive standing of CMN-ShuffleNet among existing models.

Table 15 P-test analysis.

Validation of pre-processed image using histogram equalization

Fig. 16 reports the quality of lung CT images with and without histogram equalization pre-processing, measured using BRISQUE, NIQE, and PIQE. Higher quality scores (1.5 to 1.9) in comparison to unprocessed data (scores ranging from 0.5 to 1.0) show that pre-processing generally improves classification accuracy across the board. Particularly in the context of medical imaging for lung cancer detection, these results emphasize the significance of pre-processing in improving the visual quality of input images, which in turn allows better feature extraction and more precise segmentation and classification. BRISQUE scores between 1.50 and 1.75 indicate a perceptual quality that is generally excellent after enhancement, whereas NIQE values between 1.60 and 1.70 indicate a modest, model-agnostic decline in naturalness that still falls within acceptable quality ranges. Notably, the lowest PIQE score of roughly 0.60 shows that localized regions exhibit minimal perceptual distortion. This pre-processing step probably helps the Gradient Pattern and Shape-based Modified ShuffleNet pipeline train more robustly, which makes improved segmentation and diagnostic accuracy possible.

Fig. 16

Validation of Pre-processed image.

Parametric analysis based on varying learning rate

The parametric analysis systematically varies the learning rate of the modified ShuffleNet, which is trained on gradient pattern and shape-based features taken from lung CT scans, in order to optimize the training procedure. By enhancing feature representation and stabilizing convergence, this tuning, when paired with improved M-SegNet segmentation, improves the accuracy of lung cancer classification. Table 16 shows the performance of the suggested model as a function of the learning rate. The analysis shows that a learning rate of 0.001 produces the best results, with the highest accuracy (0.946), sensitivity (0.939), specificity (0.954), precision (0.958), F-measure (0.948), and MCC (0.893), as well as the lowest false positive rate (0.045) and false negative rate (0.060). The steady improvements observed across the important assessment criteria demonstrate the suggested method’s robustness in handling intricate medical imaging tasks, which makes it well suited to reliable and accurate lung cancer diagnosis.

Table 16 Parametric analysis based on varying learning rate.

Analysis of training and testing accuracy

Table 17 shows only slight performance reductions from training to testing, indicating good generalization. The model attains high accuracy (0.9908 train, 0.9584 test) and F1-score (0.9917 train, 0.9593 test), suggesting steady and balanced performance across classes. A Matthews Correlation Coefficient (MCC) of 0.9476 (train) and 0.9167 (test) indicates a strong correlation between the predicted and actual classifications, despite minor variance. The robustness of the classifier is further supported by a low false positive rate (FPR) and false negative rate (FNR) in both training (0.0077, 0.0103) and testing (0.0401, 0.0426). These findings collectively imply that the suggested method successfully strikes a balance between sensitivity and specificity, providing a dependable and precise option for automated lung cancer diagnosis.

Table 17 Analysis of training and testing accuracy.

Analysis of training and validation loss

For the classification of lung cancer, the modified ShuffleNet model trained on gradient pattern and shape-based features shows good learning and generalization, as demonstrated by the analysis of training and validation loss in Fig. 17. Both the training and validation loss curves show a consistent decrease, signifying successful convergence. Integrating the enhanced M-SegNet yields better segmentation quality, which also helps to reduce overfitting and validation loss. Both losses begin above 0.12 and then gradually decrease, indicating that the model is learning and converging. The training loss approaches 0.09 by epoch 50 and the validation loss stabilizes slightly above it, indicating strong generalization performance and little overfitting. The close alignment of the two curves during training indicates that the model is well supported by the hybrid feature set and the precise segmentation offered by M-SegNet, which improves its capacity to identify lung cancer patterns.

Fig. 17

Training and validation loss analysis.

Analysis of ROC

The Receiver Operating Characteristic (ROC) curve plots the true positive rate against the false positive rate at different thresholds to assess the model’s capacity to differentiate between malignant and non-cancerous instances. It offers a thorough view of classification performance, with the system’s overall diagnostic accuracy indicated by the area under the ROC curve (AUC). Fig. 18 presents the ROC curve analysis comparing several deep learning models for lung cancer classification and highlights the efficiency of the Modified ShuffleNet architecture trained on gradient pattern and shape-based features, in conjunction with enhanced M-SegNet segmentation. CMN-ShuffleNet demonstrated the most robust classification performance, with the greatest AUC of 0.95 among the models studied. This high AUC value highlights the significance of combining context-aware modules with improved feature extraction approaches, especially in challenging medical imaging applications like lung cancer detection.

Fig. 18

Analysis of confusion matrix

A confusion matrix compares actual labels with predicted labels to summarize the prediction results in classification tasks. For the enhanced M-SegNet segmentation and the Modified ShuffleNet trained on gradient pattern and shape-based features, the confusion matrix in Fig. 19 aids in evaluating the model’s ability to distinguish between malignant and non-cancerous classes. It reveals strong classification performance, with the model correctly recognizing 108 non-cancerous instances (46.15%) and 115 malignant cases (49.15%), demonstrating high accuracy across both groups. Only a small number of misclassifications occurred, with 6 non-cancerous samples (2.56%) incorrectly predicted as malignant and 5 cancerous samples (2.14%) misclassified as non-cancerous. This balanced distribution of correct predictions suggests that the model is not biased toward either class and is capable of recognizing subtle differences between cancerous and non-cancerous CT slices. The low frequency of false positives and false negatives further underlines the model’s robustness, making it suitable for clinical decision support where both sensitivity (detecting cancerous instances) and specificity (correctly recognizing non-cancerous cases) are crucial.
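As an illustration of how the evaluation metrics follow from these four counts, the short Python sketch below recomputes them from the values reported for Fig. 19, taking cancer as the positive class. The variable names are chosen here for illustration, and the derived figures are simple arithmetic on the reported counts rather than additional results of the study.

```python
import math

# Counts reported for Fig. 19 (positive class = cancer).
tn, fp, fn, tp = 108, 6, 5, 115
total = tn + fp + fn + tp            # 234 test samples

accuracy = (tp + tn) / total
sensitivity = tp / (tp + fn)         # recall on cancerous cases
specificity = tn / (tn + fp)         # recall on non-cancerous cases
precision = tp / (tp + fp)
npv = tn / (tn + fn)                 # negative predictive value
fpr = fp / (fp + tn)                 # false positive rate
fdr = fp / (fp + tp)                 # false discovery rate
f_measure = 2 * precision * sensitivity / (precision + sensitivity)
mcc = (tp * tn - fp * fn) / math.sqrt(
    (tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)
)

metrics = {
    "accuracy": accuracy,
    "sensitivity": sensitivity,
    "specificity": specificity,
    "precision": precision,
    "NPV": npv,
    "FPR": fpr,
    "FDR": fdr,
    "F-measure": f_measure,
    "MCC": mcc,
}
for name, value in metrics.items():
    print(f"{name:12s} {value:.4f}")
```

With these counts, 223 of the 234 test samples are classified correctly, which corresponds to an accuracy of about 95.3%.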

Fig. 19

Confusion matrix analysis of the proposed model.

Analysis of computational time

Table 18 compares the computational time of several deep learning models used for lung cancer classification, with particular regard to the Modified ShuffleNet trained on gradient pattern and shape-based features with enhanced M-SegNet segmentation. The models with the shortest computation times among those studied are BiLSTM (29.03 s) and CMN-ShuffleNet (29.15 s), demonstrating their suitability for real-time diagnostic applications. Conventional designs with higher latency, such as GRU (53.57 s) and LeNet (50.27 s), are less appropriate for applications that require quick responses. With a computation time of 37.84 s, the suggested Modified ShuffleNet is faster than models like LinkNet (41.93 s) and ATT-DenseNet (38.82 s) while retaining superior classification accuracy; the AlexNet-SVM hybrid runs in 31.68 s. The Modified ShuffleNet’s overall computing efficiency is encouraging and enhances its usefulness for automated lung cancer diagnosis.

Table 18 Computational time analysis.

Comparison of the proposed model and the state-of-the-art technique

The comparison of the proposed model with prior work in Table 19 shows its higher performance in lung cancer classification. While methods like SVM (94.5% accuracy) and PCA-SMOTE-CNN (90.61% accuracy) offer promising results, they suffer from either lower accuracy or difficulties such as class imbalance, making them less trustworthy across different clinical scenarios. Other models that perform well include CNN and ViTs (98% accuracy) and Sampangi Rama Reddy et al.’s MFDNN (95.4% accuracy). However, the former is constrained by the specificity of the disease type (NSCLC), and the latter requires high-quality data that might not be readily available. By contrast, the suggested solution employs a lightweight ShuffleNet architecture combined with modified recurrent residual blocks (mRRB), enabling effective segmentation and classification without excessive computational expense. This makes it particularly well suited to real-time clinical application, where both high performance and processing economy are critical. As a result, the suggested approach compares favorably with the state-of-the-art methods listed and offers a reliable, effective, and accurate option for lung cancer detection.

Table 19 Comparison of the proposed method and the state-of-the-art technique.

Critical analysis

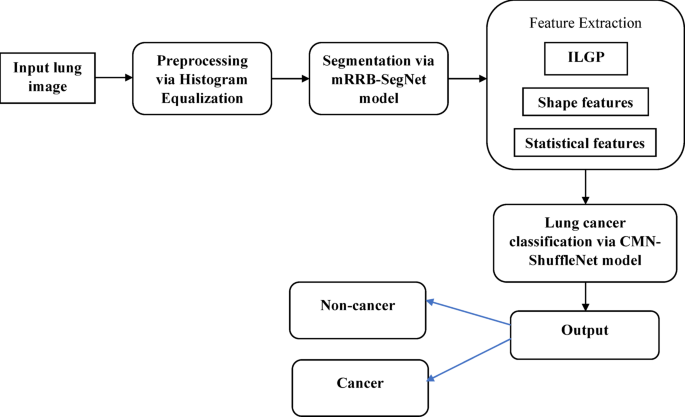

The four-phase method, which comprises histogram equalization, mRRB-SegNet-based lobe segmentation, robust feature extraction (including ILGP, shape, and statistical descriptors), and final classification using CMN-ShuffleNet, addresses multiple crucial aspects of automated diagnosis. Although the measurements indicate better performance, it remains to be confirmed that the model generalizes well and that overfitting is entirely avoided. Histogram equalization enhances contrast, but its effect on subtle pathological traits must be considered carefully. Performance metrics such as accuracy, precision, F-measure, MCC (Matthews Correlation Coefficient), NPV (Negative Predictive Value), FPR (False Positive Rate), and FDR (False Discovery Rate) are crucial for evaluating the robustness and dependability of the Modified ShuffleNet model, trained on gradient pattern and shape-based features with enhanced M-SegNet segmentation, across a range of diagnostic dimensions. Accuracy, precision, recall, and F1-score have all improved significantly with DenseNet + Attention Mechanism16. Through its dense connections, DenseNet facilitates effective feature learning by giving every layer access to information from earlier layers. ATT-DenseNet prioritizes accuracy gains through more intricate networks and attention-based mechanisms, while the Modified ShuffleNet with gradient features is focused on computing efficiency, which could result in faster real-time predictions. DenseNet models, however, can be computationally costly, particularly with larger datasets. ATT-DenseNet uses attention to prioritize specific regions of the image, which suits complex tasks that require fine-grained focus, whereas the suggested ShuffleNet approach is lighter but may not match it in accuracy. The computational time analysis is carried out to assess the efficiency of the proposed model relative to the existing methods.

The VGG16 model35 classified lung cancer into three groups with a high accuracy of 98.18%. As a very deep model, VGG16 can be computationally expensive, particularly when dealing with large datasets of CT scans, and its memory and processing requirements are significantly higher than those of the Modified ShuffleNet. Even though VGG16 delivers superior accuracy, ShuffleNet’s efficiency may make it more appropriate for applications requiring real-time classification with low latency and for contexts with limited resources. Because both models were evaluated on relatively small datasets, they may not generalize equally well across a variety of clinical settings.

The ShuffleNet-based method does not appear to use data augmentation techniques, whereas LCGAN14 addresses data scarcity by augmenting training with synthetic data. If there is not enough training data, this could be a limitation. The GAN-based method can generate synthetic data to increase the model’s resilience and prevent overfitting. The ShuffleNet-based method, on the other hand, concentrates on improving the segmentation and feature extraction procedures without using artificial data. Across the five folds, the proposed CMN-ShuffleNet, using the improved M-SegNet segmentation and gradient pattern and shape-based feature training, consistently outperformed all other models, achieving the highest accuracy in each case (ranging from 0.9379 to 0.9503). This highlights the model’s improved ability to detect lung cancer accurately and reduce missed diagnoses, which is a crucial requirement in clinical decision support systems.
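The five-fold evaluation can be illustrated with a minimal sketch of a stratified split, in which each fold keeps roughly the same proportion of the two classes. The study does not state how its folds were constructed, so the stratification, the random seed, and the helper name `stratified_kfold` are assumptions made here for illustration; only the class counts (1112 non-cancer, 1224 cancer) come from the dataset description.

```python
import random


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs that preserve class proportions per fold."""
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        # Deal the shuffled indices of this class round-robin into the folds.
        for pos, i in enumerate(idx):
            folds[pos % k].append(i)
    for f in range(k):
        test_idx = sorted(folds[f])
        train_idx = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train_idx, test_idx


# Class counts from the dataset description: label 0 = non-cancer, label 1 = cancer.
labels = [0] * 1112 + [1] * 1224
splits = list(stratified_kfold(labels, k=5))

for fold, (train_idx, test_idx) in enumerate(splits, start=1):
    n_cancer = sum(labels[i] for i in test_idx)
    print(f"fold {fold}: train={len(train_idx)} test={len(test_idx)} "
          f"cancer in test={n_cancer}")
```

In such a scheme, a model would be trained on the four training folds and scored on the held-out fold, and the per-fold accuracies would then be compared across models.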

Practical implications

The real-world uses of a Modified ShuffleNet trained on gradient pattern and shape-based features for lung cancer classification, in conjunction with enhanced M-SegNet segmentation, cover several significant fields in medical imaging and diagnostics. By helping radiologists correctly identify and categorize lung nodules in CT scans, this integrated strategy can be deployed in hospital radiology departments and mobile health units, allowing for an earlier and more accurate diagnosis of lung cancer. Because its lightweight design enables real-time analysis on low-power or embedded devices, it is well suited to settings that lack high-end computing resources, such as point-of-care diagnostics, telemedicine, and rural healthcare. The precise segmentation provided by M-SegNet also facilitates quantitative analysis, surgical planning, and treatment monitoring, ultimately enabling improved clinical results and more individualized patient care.