The computational setup for this experiment has the following specifications:

The experiment is divided into two parts. The first part covers active reinforcement deep learning. The second part uses extracted traditional features to classify the fully labeled dataset with different machine learning algorithms, alone and in combination with various deep learning models.

The first part describes how the CoAtNet deep learning architecture and ARL techniques are used to enhance the performance of a medical image classification model. First, the pipeline loads the labeled and unlabeled medical images. The labeled data, which have corresponding class labels (such as cancer and non-cancer), are resized to 128 x 128 pixels, and pixel values are normalized. Second, an initial CoAtNet model is constructed and trained on the labeled dataset for 20 epochs with a batch size of 64. The initial labeled data were divided into training (70%), validation (15%), and testing (15%) subsets. The test set, containing 912 images from 24 patients, was strictly held out at the patient level and was never used at any point in the pipeline, including initial model training, active learning iterations, pseudo-labeling, Q-learning updates, and retraining of the model.
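To illustrate what a patient-level hold-out means in practice, the following is a minimal sketch that assigns all images of a given patient to exactly one subset. The function name `split_by_patient`, the toy patient identifiers, and the fixed seed are assumptions made for illustration only; they are not taken from the original pipeline.

```python
import random


def split_by_patient(patient_ids, train_frac=0.70, val_frac=0.15, seed=0):
    """Split image indices so that no patient appears in more than one subset.

    patient_ids[i] is the (hypothetical) patient identifier of image i.
    """
    unique_patients = sorted(set(patient_ids))
    random.Random(seed).shuffle(unique_patients)

    n_train = round(train_frac * len(unique_patients))
    n_val = round(val_frac * len(unique_patients))
    train_patients = set(unique_patients[:n_train])
    val_patients = set(unique_patients[n_train:n_train + n_val])

    split = {"train": [], "val": [], "test": []}
    for index, pid in enumerate(patient_ids):
        if pid in train_patients:
            split["train"].append(index)
        elif pid in val_patients:
            split["val"].append(index)
        else:
            split["test"].append(index)  # remaining patients are held out
    return split


# Toy example: 20 hypothetical patients with 3 images each.
toy_ids = [f"patient_{i:02d}" for i in range(20) for _ in range(3)]
toy_split = split_by_patient(toy_ids)
```

Splitting on patient identifiers rather than on images is what prevents slices from the same patient from appearing on both sides of the train/test boundary.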

The initial model’s training accuracy, validation accuracy, and AUC score were all recorded. The initial training accuracy was roughly 68%, whereas the validation and testing accuracies were 67.9% and 67.2%, respectively, as shown in Table 4. Furthermore, the initial model’s AUC of 75.1% reflects its ability to differentiate between positive and negative cases. The precision was 68.9%, the recall 69.8%, and the F1-score 69.3%.

Active Learning (AL) boosts performance by selecting the most informative, uncertain samples, which helps reduce model uncertainty and enhances generalization. However, since AL cannot correct noisy pseudo-labels, its improvement is naturally limited. Even so, it provides a realistic gain, with test accuracy increasing from 67% to 89.1% and AUC rising from 75.1% to 93.5%.

The performance of the final active reinforcement CoAtNet deep learning model is as follows. The model achieves a recall of 98.4%, a precision of 99.9%, and an F-score of 98.4%. Its training accuracy is 99.8%, indicating its ability to fit the training data. In testing, the model reaches an accuracy of 99.5%. The AUC is 99.8%, which indicates its effectiveness in distinguishing between positive and negative cases.

Table 4 Performance metrics of the initial & final active reinforcement CoAtNet model.

Figure 4 shows the confusion matrix of the initial model, for which the total number of testing cases is 912. The confusion matrix in Fig. 5 for the final active reinforcement deep learning model gives a comprehensive view of its classification performance on the same 912 testing cases, with predictions evaluated against the actual labels. The model correctly identified 456 true negative cases and 449 true positive cases. It made 7 false negative predictions and no false positive predictions.
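As a worked illustration of how the standard metrics follow from confusion-matrix counts, the sketch below applies the usual definitions to the four counts stated for Fig. 5. The function name `metrics_from_counts` is hypothetical, and the derived values depend only on these counts; they may differ from tabulated values because of rounding or averaging conventions.

```python
def metrics_from_counts(tp, tn, fp, fn):
    """Derive binary-classification metrics from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "total": total,
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Counts as stated in the text for the final model (Fig. 5).
final_metrics = metrics_from_counts(tp=449, tn=456, fp=0, fn=7)
for name, value in final_metrics.items():
    print(name, value)
```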

Fig. 4

Confusion matrix of the initial active reinforcement CoAtNet deep learning model.

Fig. 5

Confusion matrix of the final active reinforcement CoAtNet deep learning model.

Table 5 reports the time measurements of the initial and final models in the active reinforcement CoAtNet deep learning experiment, which uses 6080 labeled CT images. For the initial model, the training, validation, and testing times are 60.59 s, 0.259 s, and 0.26 s, respectively. The training time of the final model is 23400 s, while the testing time over all iterations is substantially lower at 142.34 s.

Table 5 Time performance measurement of Initial & Final ARL with CoAtNet Model.

Figure 6 presents the performance measurements for each iteration of ARL. The ARL process aims to improve the model’s performance over iterations by selectively choosing data points for labeling and training. In the initial query (iteration 1), the model already improves on the initial model, achieving an accuracy of 73.0%, a precision of 74.1%, a recall of 71.9%, an F1-score of 73.9%, and an ROC AUC of 83.9%. In each subsequent iteration, the framework selects a batch of unlabeled data based on the model’s uncertainty (entropy of predictions) and then labels this batch, so that labeling is prioritized for the data most likely to improve the model. The labeled data from each iteration are added to the labeled dataset, the model is retrained on the expanded dataset, and the performance metrics are recalculated. Iterations 2, 3, 5, 6, and several others show consistent near-perfect results across all metrics, indicating that the model maintains a high level of performance as more labeled data are incorporated. Notably, in several iterations the recall and ROC AUC values remain consistently high, which indicates that the model identifies positive instances effectively. Overall, the model maintains high performance, with slight deviations in individual metrics.
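The entropy-based selection step described above can be sketched as follows. This is a minimal illustration that assumes the model outputs a probability vector per unlabeled image; the function names, the toy probabilities, and the batch size are hypothetical, and the reinforcement learning component of the framework is not modeled here.

```python
import math


def predictive_entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_most_uncertain(prob_rows, batch_size):
    """Return indices of the batch_size samples with the highest entropy."""
    ranked = sorted(
        range(len(prob_rows)),
        key=lambda i: predictive_entropy(prob_rows[i]),
        reverse=True,
    )
    return ranked[:batch_size]


# Toy pool of four unlabeled images with [non-cancer, cancer] probabilities.
pool_probs = [[0.99, 0.01], [0.55, 0.45], [0.80, 0.20], [0.50, 0.50]]
query_indices = select_most_uncertain(pool_probs, batch_size=2)
print(query_indices)
```

Samples whose predicted probabilities are close to uniform have the highest entropy, so they are queried first; confidently classified samples are left in the pool.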

In summary, in the first experiment the Active Reinforcement Deep Learning model improves significantly over the iterations. Active Learning combined with Reinforcement Learning (AL+RL) addresses AL’s main limitation by learning an optimal labeling policy that corrects pseudo-label errors and stabilizes uncertainty sampling. This ability to reduce noise explains the larger performance gain from AL to AL+RL, which reaches 99.5% test accuracy and 99.8% AUC, making the final improvement both substantial and well justified. In addition, its low testing time makes it a practical tool for real-world applications.

Fig. 6

Performance measurements across iterations of active reinforcement CoAtNet learning.

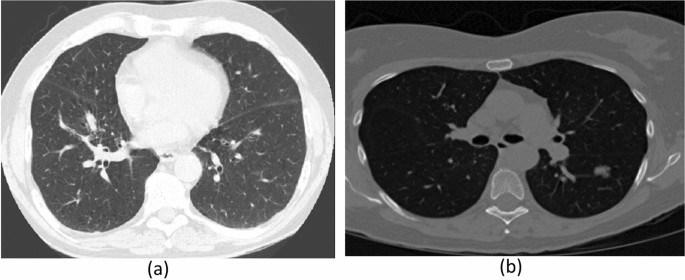

After completion of the initial stage, in which all CT images were labeled using the Active Reinforcement Learning (ARL) framework, the full labeled dataset (30,020 CT images) progressed to the second stage. This stage focused on extracting both traditional and deep features to support a rigorous assessment of various machine learning and deep learning models in medical image classification. To ensure integrity and prevent data leakage, the dataset was partitioned at the patient level into 70% training (21,014 images, 553 patients), 15% validation (4503 images, 119 patients), and 15% testing (4503 images, 118 patients) sets for all models. A crucial aspect of this stage was a final, strictly held-out test set that remained entirely separate and was not used at any point during model development, thereby guaranteeing an unbiased evaluation of the models’ generalization capabilities. This stage was executed in three approaches:

- First, traditional feature extraction and classification. Texture features, shape features, intensity-based features, and correlation features are extracted from the pre-processed CT lung images. These traditional features are then used to train and evaluate several classification algorithms, namely XGBoost, Random Forest (RF), Decision Tree (DT), and Bayesian Network.

- Second, deep feature extraction and classification. A simple CNN model with attention fusion features, a simple CoAtNet, and a complex CoAtNet with attention fusion features were developed for image classification. Each architecture is built from convolutional layers, pooling layers, fully connected layers, and an output layer, and is trained on the same data. Key hyperparameters such as the learning rate, optimizer, number of epochs, number of layers, and activation functions were fixed as shown in Table 3. Each model’s performance is then evaluated on the testing set using the performance metrics.

- Finally, combination of traditional features with deep features and classification. Based on a comparative analysis of accuracy and computational efficiency, the simple CNN with attention fusion was identified as the optimal deep learning model for integration with the previously extracted traditional features. The resulting fused model was then evaluated on the testing set to ascertain the synergistic contribution of combining traditional and deep features. To understand how different feature types and architectural choices affect results, we conducted systematic ablations. We compared models using only traditional features, only deep features, and combined fused features. This analysis highlighted how feature selection methods, attention fusion mechanisms, and the complexity of the network architecture influenced the final classification performance.

In the first approach, Fig. 7 presents an extensive evaluation of machine learning algorithms for CT lung image classification under various feature extraction and selection techniques. With all four feature types combined after normalization, the XGBoost model achieved 96% training accuracy and 65% testing accuracy, while the Random Forest model reached 100% training accuracy and 70% testing accuracy. The Bayesian Network and DT models had relatively lower testing accuracy scores. With Forest Features extraction, the XGBoost model improved, achieving 96% training accuracy and 71% testing accuracy. The Random Forest model maintained its high accuracy, reaching 100% in training and 85% in testing.

Applying feature selection methods then further refined the models. With the CFS and FF methods, the XGBoost model reached 99% training accuracy and 67% testing accuracy, and the RF model achieved 85% testing accuracy while keeping its perfect training accuracy. With the RFE method, the XGBoost, DT, and RF models achieved training accuracies of around 98%, 94%, and 100%, and testing accuracies of around 68%, 68%, and 74%, respectively, as shown in Fig. 7.

Overall, the Random Forest model consistently outperformed the other models in terms of accuracy in CT lung image classification, even after feature selection, as shown in Table 6.

The consistently extremely low p-values across all metrics—Accuracy, AUC, Precision, Recall, and F1-score—in Table 7 provide strong statistical evidence that RandomForest_FF outperforms all other compared classifiers. Many p-values are effectively zero (for example, \(1.52524\times 10^{-22}\) for Accuracy versus XGBoost_All, and 0.0 for Recall versus Bayes_All and DT_All), which clearly indicates that these performance differences are highly significant and not due to chance. This firmly supports the conclusion that RandomForest_FF is the superior model.

Table 6 Performance measurement with different ML algorithms and various feature extraction & selection methods.

Table 7 Statistical comparison of RandomForest_FF with other classifiers.

Fig. 7

Training accuracy, testing accuracy, and AUC of different classifiers.

Secondly, this step presents a thorough assessment of deep learning models in the context of medical image classification. The deep learning models, which are commonly applied to cancer diagnosis, were evaluated on the 30,020 CT image dataset: the simple CNN with attention fusion features, the simple CoAtNet, and the complex CoAtNet. The CoAtNet deep learning model significantly improved the performance of automated diagnosis on CT lung images. In this part, the dataset was split into 70% for training, 15% for validation, and 15% for testing. This model achieved an accuracy of 91.1%, an AUC of 97.4%, a precision of 93.4%, a recall of 88.4%, and an F1-score of 90.8% on the testing set. In addition, the simple CNN with attention fusion features achieved 94.1% training accuracy, 87.4% validation accuracy, 88.1% testing accuracy, 95% AUC, 88.5% F1-score, 92.1% recall, and 85.2% precision. The CoAtNet with attention fusion features achieved 92.88% training accuracy, 89.5% validation accuracy, 90.1% testing accuracy, 96% AUC, 90.2% F1-score, 90.7% recall, and 89.6% precision.

Finally, the simple CNN model with traditional features achieved training and testing accuracies of 96.7% and 95.0%, respectively, taking 19794.37 seconds for training and 17 seconds for testing. The second model, CoAtNet with traditional features, performed poorly, with a training accuracy of 79.7% and a testing accuracy of 70.5%, and it also took a long time to train (89504.5 seconds) and to test (88.3 seconds). The combination of the simple CNN with attention fusion features and traditional features reached a testing accuracy of 99.9%, as shown in Fig. 8.

Fig. 8

Training and testing accuracy of DL models with traditional features.

In summary, Tables 8 and 9 compare the performance of different deep learning models. Table 8 presents multiple evaluation metrics beyond simple accuracy. Alongside training and test accuracy, it includes ROC-AUC, PR-AUC, precision, recall (sensitivity), specificity, F1-score, exact AUC values, Brier scores for calibration, and memory usage, providing a comprehensive assessment of model reliability and practical deployment feasibility. These metrics indicate that the high performance observed is statistically significant, reliable, and reproducible across important evaluation criteria.

In Fig. 9, Decision Curve Analysis (DCA) was used to assess the net clinical benefit across a range of threshold probabilities. The proposed model consistently demonstrated higher net benefit compared to the other models as well as the treat-all and treat-none strategies, suggesting strong potential for clinical utility.
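For reference, the net benefit plotted in a decision curve follows the standard definition NB = TP/n - (FP/n) * pt/(1 - pt), where pt is the threshold probability. The sketch below computes it for a model and for the treat-all strategy on toy data; the function names, labels, and probabilities are hypothetical and are not results from this study. The treat-none strategy has a net benefit of zero by definition.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating every case with predicted risk >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    return tp / n - (fp / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(y_true, threshold):
    """Net benefit when every case is treated regardless of predicted risk."""
    return net_benefit(y_true, [1.0] * len(y_true), threshold)


# Toy labels (1 = cancer) and predicted probabilities, for illustration only.
toy_labels = [1, 1, 0, 0, 1, 0, 0, 1]
toy_probs = [0.9, 0.8, 0.3, 0.1, 0.7, 0.6, 0.2, 0.4]

nb_model = net_benefit(toy_labels, toy_probs, threshold=0.5)
nb_all = net_benefit_treat_all(toy_labels, threshold=0.5)
print(nb_model, nb_all)
```

Repeating this calculation over a grid of thresholds (strictly below 1) and plotting the three strategies gives the decision curve.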

Table 9 compares the computation time of the different models. The simple CNN with attention fusion model trains in 526.74 s and tests in 0.30 s, which is faster than the other models.

Table 8 Comparison of performance using various DL models.

Table 9 Comparison of time of performance using various DL models.

Fig. 9

ROC curve of simple CNN with Atten. Fusion+Trad. features model.

The results in Table 10 show that the performance improvements of CNN_Attn_Trad are highly unlikely to be due to chance. Both paired t-tests and Wilcoxon tests yield extremely small p-values, such as \(1.03 \times 10^{-19}\) and \(1.45 \times 10^{-70}\), confirming that the model’s superiority is statistically significant and consistently reproducible across key evaluation metrics. Furthermore, 95% confidence intervals for ROC_AUC were calculated using 1,000 bootstrap resamples, and the DeLong test confirmed that the proposed model’s ROC_AUC (0.959, 95% CI 0.950–0.968) is significantly higher than that of other models (\(p < 0.001\)), demonstrating that the performance improvement is robust and not due to random chance.
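A bootstrap confidence interval for ROC AUC with 1,000 resamples can be sketched as below. This is a minimal illustration under stated assumptions: it uses the percentile method, computes AUC as the fraction of positive-negative pairs ranked correctly, and runs on toy labels and scores. The function names, seed, and data are hypothetical, and the DeLong test is not implemented here.

```python
import random


def roc_auc(y_true, y_score):
    """AUC as the probability that a positive outscores a negative (ties = 0.5)."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC requires both classes to be present")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def bootstrap_auc_ci(y_true, y_score, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for ROC AUC."""
    rng = random.Random(seed)
    n = len(y_true)
    aucs = []
    while len(aucs) < n_resamples:
        idx = [rng.randrange(n) for _ in range(n)]
        labels = [y_true[i] for i in idx]
        if len(set(labels)) < 2:
            continue  # resample contains a single class; draw again
        aucs.append(roc_auc(labels, [y_score[i] for i in idx]))
    aucs.sort()
    lower = aucs[int((alpha / 2) * n_resamples)]
    upper = aucs[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Toy labels and scores, for illustration only.
toy_y = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]
toy_s = [0.1, 0.2, 0.3, 0.4, 0.45, 0.5, 0.6, 0.7, 0.8, 0.9]

toy_auc = roc_auc(toy_y, toy_s)
ci_low, ci_high = bootstrap_auc_ci(toy_y, toy_s)
print(toy_auc, ci_low, ci_high)
```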

Table 10 Statistical comparison of model performance (p-values).

Table 11 summarizes comparable studies that use CT (computerized tomography) imaging to improve classification performance. For each study, the table lists the total number of CT images used, the accuracy achieved, the annotated dataset size, the computational cost (GPU and training/testing time), and interpretability. Notably, all of these studies are based on the Kaggle Bowl 2017 dataset, which includes 2101 patients and 285,380 CT images.

The proposed ARXAF-Net model stands out for its use of a substantially larger dataset of 30,020 CT images and for its accuracy of 99.9%. Although Table 11 compares ARXAF-Net with previous studies on the Kaggle Bowl 2017 dataset, differences in data splits, pre-processing methods, and task definitions (such as nodule detection versus image-level classification) make it difficult to compare the reported performance metrics directly.

Nonetheless, this achievement illustrates the benefits of using a substantial number of CT images during both the training and evaluation stages of our research. With a larger dataset, we can capture a broader array of lung characteristics, patterns, and variations, enhancing the accuracy of the model. The comparative analysis also underscores the drawbacks of relying on smaller datasets in earlier research, which may fail to represent the full spectrum of imaging scenarios encountered in lung CT scans.

Our proposed ARXAF-Net offers several important advantages. First, its use of a significantly larger dataset provides better generalization and higher accuracy, as reflected in the 99.9% accuracy achieved. Second, it is computationally efficient, with training taking around 3.3 hours and testing just 1.2 minutes on a single NVIDIA L40S GPU, making it feasible for real-world deployment. Additionally, the RL-based Active Learning strategy helps optimize the annotation process, potentially reducing the manual effort and time required for labeling. Finally, built-in interpretability through Grad-CAM visualizations and RL-based feature importance provides insight into the model’s decision-making, enhancing transparency and trust.

Table 11 Comparison of the proposed ARXAF-Net model with other studies using the Bowl 2017 dataset.